In January, David Broockman, then a political science Ph.D. student at UC Berkeley, found something unusual about a study he and fellow student Joshua Kalla were trying to replicate. The data in the original study, collected by UCLA grad student Michael LaCour and published in Science last December, had shown that gay canvassers sent door-to-door in California neighborhoods could have a lasting impact on voter attitudes toward marriage equality after a brief conversation on the subject in which they disclosed their own sexual orientation.

The study had “irregularities,” Broockman and Kalla found. For one thing, the data were far less noisy than usual for a survey. What’s more, the researchers weren’t getting anywhere near the survey response rate LaCour had reported. When they called the survey company involved, it responded that the study was beyond the company’s capabilities. No such study, it seemed, had been done.

In May, Broockman, Kalla, and fellow researcher Peter Aronow aired their suspicions in a 27-page report. Nine days later, Science issued a retraction, causing a minor uproar in academia and embarrassing those in the popular media who had trumpeted the findings without skepticism.

Radio host Ira Glass was one of them. He had featured the study in a segment on his popular program, This American Life. In a subsequent blog post on the show’s website, Glass explained to his listeners, “We did the story because there was solid scientific data … proving that the canvassers were really having an effect…. Our original story was based on what was known at the time. Obviously the facts have changed.”

More accurately, the facts had never really been facts at all.

1998

Year in which the British medical journal The Lancet published a study suggesting a link between autism and vaccines.

Glass’s acknowledgment underscores a pair of common foibles in journalism: first, a tendency to rely on, and emphasize, the results of a single study; and second, the abdication of skepticism in the face of seemingly “solid scientific data.” But it’s not just journalism. Even other social scientists, when asked why they hadn’t questioned the counterintuitive results, pointed to their trust in the authority of Donald Green, the respected Columbia professor who coauthored the original paper. (Green, for his part, appears to have been as unpleasantly surprised as anyone and promptly asked Science for the retraction.)

2010

Year The Lancet published a retraction of the discredited study.

It’s probably also true that many in the media and academia simply wanted to believe the findings. Broockman and Kalla were trying to replicate LaCour’s findings, not debunk them. They had admired the study, and they recall that their first instinct was not to suspect LaCour but simply to marvel at the strangeness of his results.

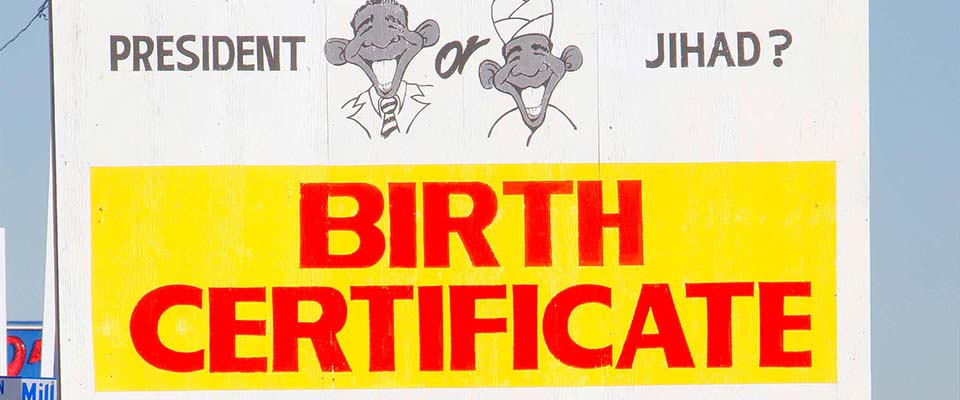

33

Percentage of American parents surveyed by The National Consumers League in 2014 who believe vaccines are linked to autism.*

The LaCour affair is just one entry in a series of embarrassing revelations that call into question the reliability of published science. One need only consider the steady parade of contradictory health claims in the news: soy, coffee, olive oil, chocolate, red wine—all have been promoted as “miracle foods” based on scientific studies, only to be reported as health risks based on others. Perhaps the most notable hit to science, however, was a recent large-scale attempt to replicate 100 published psychology studies, fewer than half of which could actually be re-created. In fact, just 39 percent. No one is suggesting that the other 61 studies, or even a small percentage of them, were falsified. It’s just that, in the words of the study itself (also published in Science), “Scientific claims should not gain credence because of the status or authority of their originator but by the replicability of their supporting evidence. Even research of exemplary quality may have irreproducible empirical findings because of random or systematic error.”

In other words, just because it’s published doesn’t mean it’s right.

Science and journalism seem to be uniquely incompatible: Where journalism favors neat story arcs, science progresses jerkily, with false starts and misdirections in a long, uneven path to the truth—or at least to scientific consensus. The types of stories that reporters choose to pursue can also be a problem, says Peter Aldhous, a teacher of investigative reporting at UC Santa Cruz’s Science Communication Program, lecturer at Berkeley’s Graduate School of Journalism, and science reporter for BuzzFeed. “As journalists, we tend to gravitate to the counterintuitive, the surprising, the man-bites-dog story,” he explains. “In science, that can lead us into highlighting stuff that’s less likely to be correct.” If a finding is surprising or anomalous, in other words, there’s a good chance that it’s wrong.

On the flip side, when good findings do get published, they’re often not as earth shattering as a writer might hope. “A lot of basic research to a layperson is kind of boring,” says Robert MacCoun, a former Berkeley public policy professor now at Stanford Law School. “I would have real trouble trying to explain at a party to someone why they should really be fascinated by it.” So journalists and their editors might spice up a study’s findings a bit, stick the caveats at the end, and write an eye-catching, snappy headline—not necessarily with the intent to mislead, but making it that much more likely for readers to misinterpret the results.

That’s something science journalists have to guard against, Aldhous says. “I’m not arguing for dull headlines, I’m not arguing for endless caveats. But maybe what we should be aiming for is a little bit more discretion and skepticism in the stories we choose to cover.”

Journalism’s driving pressure, and the source of many of its shortcomings, is time. News proceeds apace, and journalists have to scramble to stay on top of the news cycle. Sometimes, in the rush to beat deadlines, accuracy falls by the wayside. So why not slow down and make sure all the facts are correct?

Taking the time to vet the science underlying a finding can take forever, Aldhous says. “If you’re going to challenge everything and inspect people’s data on everything you cover, you’re probably not going to have a job at many leading news organizations for very long.” Aldhous himself once exposed a researcher who had falsified stem cell images in a lab at the University of Minnesota. Two of the lab’s papers were eventually retracted, but the story required years of assiduous reporting. “It takes an enormous amount of time if you’re really getting into that level of detail,” Aldhous says.

Underlying all this is what he sees as a fundamental tension at the core of science journalism. “In news, you gotta report the new exciting thing,” he says. “The problem is, you’ve got to realize the way science works—that the new exciting thing possibly, or even probably, in the fullness of time, isn’t going to be right.”

Beyond the inherent difficulties of covering science, science journalism is also heavily influenced by science PR. It isn’t a new concern. In a 1993 article, Chicago Tribune writer John Crewdson wondered whether science writers who simply accepted press releases from science organizations weren’t acting as “perky cheerleaders” as opposed to watchdog reporters. The concern was borne out by a 2014 British Medical Journal study which found, not surprisingly, that the more university press releases exaggerated results, the more inaccurate the resulting news articles turned out to be.

“Scientists are supposed to be the ultimate skeptics, and journalists covering science should be ultra-skeptics,” Aldhous says. “We should question everything.” Moving forward, he says, science journalists should cover their beat in a way that’s less focused on “what the press releases from Nature tell us is new and interesting, and look perhaps a little bit more at how this stuff is panning out.”

Eschewing the ready supply of stories would be a big cultural shift. But if it happened, he says, “it would lead to more interesting, more societally relevant” coverage.

Better journalism is at best half the solution, though. LaCour’s fabricated data weren’t ginned up by the media, after all—they originated from the researcher himself. And while straight-up fraud is rare, academia isn’t exactly rewarding the practice of good science.

MacCoun, the public policy professor, says the expectations for researchers just entering the job market are tougher than ever. “You have some of the top candidates coming out of graduate school with as many publications as it used to take to get tenure as an assistant professor,” he says. “It’s just an arms race.” Rather than the blatant fabrication of data, he says, “a much bigger problem is that people who aren’t trying to commit fraud are unwittingly engaged in bias because they are just cranking out study after study after study, and only publishing the ones that work.”

If you ask Michael Eisen, a Berkeley biologist and an outspoken critic of the scientific journal system, the quantity of papers isn’t the only problem. One reason LaCour’s paper got so much buzz was that it was published in Science, a high-profile, high-impact journal with an extremely low acceptance rate. To many university hiring committees, Eisen argues, that’s a convincing enough proxy for important, trustworthy research.

In the beginning, he explains, journals were simply a way to relate the proceedings of meetings of small groups of amateur scientists. But as science became a profession and institutions formed, journals began vetting the results of studies and, due to limited space, only published what they found most interesting. The selection process, fraught with subjectivity and prey to the whims of scientific trends, tends to ignore the integrity of experimental methods in favor of eye-catching results, says Eisen. “The journals were created to serve science, but now they’re doing the opposite—it’s the journals that are driving science rather than communicating it.”

As with the mainstream science media, the science journals are looking for big, game-changing discoveries, not the slow, unglamorous march of scientific progress.

“There’s this great phrase of ‘doing the barnacles,’” Eisen explains. After Charles Darwin returned from his voyage on the Beagle, he spent eight painstaking years identifying, classifying, describing, and drawing barnacles, producing sketches and observations that marine biologists still use today. No one wants to do the barnacles anymore, Eisen says, because it takes forever, it’s hard, and it’s so poorly rewarded by the system. And while meticulous barnacle-type work is the bedrock of good science, scientific journals’ constant need for gee-whiz results actively encourages slapdash research.

“You expect that for every hundreds of experiments you do, you’ll get one really amazing one,” Eisen points out. “But when you’re not rigorous, you get the wrong result, and if most things are destined to be wrong or uninteresting, then if you do them poorly you’re more likely to have interesting results than if you do them well.” (And, remember, those are the studies that are more likely to get written up in the news!)
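Eisen's point can be made concrete with some back-of-the-envelope arithmetic. The sketch below is only an illustration: the share of hypotheses that are actually true (1 in 100), the chance of detecting a real effect (80 percent), and the false-alarm rates for a careful lab and a sloppy one (5 and 30 percent) are all assumed numbers, not figures from Eisen or from any study.

```python
def interesting_results(n_experiments, share_true, power, false_alarm_rate):
    """Return (expected count of 'interesting' results, share of them that are wrong)."""
    n_true = n_experiments * share_true
    n_false = n_experiments - n_true
    real_hits = n_true * power                # real effects that are correctly detected
    false_hits = n_false * false_alarm_rate   # nothing there, but the result looks exciting
    total = real_hits + false_hits
    return total, false_hits / total


# Every input below is an assumption chosen for illustration.
careful = interesting_results(1000, share_true=0.01, power=0.8, false_alarm_rate=0.05)
sloppy = interesting_results(1000, share_true=0.01, power=0.8, false_alarm_rate=0.30)

print(f"careful lab: {careful[0]:.1f} exciting results, {careful[1]:.0%} of them wrong")
print(f"sloppy lab:  {sloppy[0]:.1f} exciting results, {sloppy[1]:.0%} of them wrong")
```

Under these assumptions the sloppy lab turns out roughly five times as many exciting results as the careful one, and a larger share of them are wrong.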

10

Factor by which retraction notices in scientific journals increased between 2000 and 2010.

For example: In 2010, scientists announced that they had discovered a bacterium that could incorporate arsenic into its DNA. The results were, again, published in Science. “This was a very sexy paper,” Eisen recalls. “But it was just totally wrong. It took about an hour after it was published before people started shooting holes in it, and ultimately people tested the idea and it all turned out to be wrong.” That never would’ve happened, he says, if the journal had rigorously reviewed the paper without an eye to how alluring the finding was.

44

Percentage of retractions attributed to “misconduct,” including fabrication and plagiarism.*

MacCoun agrees with the basic analysis. “We’ve unintentionally created incentives that encourage bias,” he claims, “and we need to adjust incentives so we can encourage and reward quality control.”

The push to publish for the sake of publishing has some unfortunate side effects. Studies that don’t yield any new relationships—null results—are just as important to science as findings that discover something new. But Stanford political scientist Neil Malhotra found that studies that yielded strong, positive results were 60 percent more likely to be written up than were null results. And, MacCoun says, “we’re beginning to realize that … researchers are only telling us about the findings that are significant, and that the true error rates are a lot higher than the published error rates.”
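A small simulation suggests why selective reporting distorts the record. This is a sketch under stated assumptions, not a reconstruction of Malhotra's analysis: the true effect (0.1 standard deviations), the sample size (30 people per group), and the rule that only positive results clearing the usual significance cutoff get "published" are all hypothetical choices.

```python
import random
from math import sqrt
from statistics import mean


def run_study(rng, true_effect, n_per_group):
    """Simulate one two-group study and return the observed difference in means."""
    treated = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
    control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    return mean(treated) - mean(control)


def simulate_literature(n_studies=2000, true_effect=0.1, n_per_group=30, seed=1):
    """Run many studies, then keep only the positive, significant ones."""
    rng = random.Random(seed)
    std_error = sqrt(2.0 / n_per_group)  # both groups are assumed to have unit variance
    cutoff = 1.96 * std_error            # the p < 0.05 line for a two-sided z-test
    all_effects = [run_study(rng, true_effect, n_per_group) for _ in range(n_studies)]
    published = [effect for effect in all_effects if effect > cutoff]
    return all_effects, published


all_effects, published = simulate_literature()
print(f"average effect across all studies: {mean(all_effects):.2f}")
print(f"average effect in published ones:  {mean(published):.2f}")
print(f"share of studies published:        {len(published) / len(all_effects):.1%}")
```

Because a study is kept only when its estimate lands above the cutoff, the published average has to exceed that cutoff, which here is about five times the true effect. A reader who sees only the published studies would badly overestimate what is going on.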

In an article for the statistically minded website FiveThirtyEight, science writer Christie Aschwanden introduced readers to a common practice called p-hacking, whereby researchers can tweak the analysis of multivariable social science data until it produces results that are “statistically significant,” though quite possibly false. Statistical significance, as determined by a low-enough “p-value” (that is, the probability of getting a result at least as extreme as yours if there were no real effect), is the ticket to getting results published. The conventional threshold for statistical significance is a p-value of 0.05. But let’s say you arrive at a p-value of 0.06. Do you give up on your hunch? Or do you tweak a few variables until you nudge that p-value down a skosh?
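To see how much room that leaves, consider one simple form of p-hacking: measuring several outcomes and reporting whichever one clears the threshold. The simulation below is a minimal sketch, not Aschwanden's demonstration. It assumes there is no real effect at all, ten independent outcomes per study, 20 people per group, and a plain z-test; each of those choices is hypothetical.

```python
import random
from math import erfc, sqrt


def null_p_value(rng, n_per_group):
    """Two-sided z-test p-value for one outcome when there is truly no effect."""
    treated = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    diff = sum(treated) / n_per_group - sum(control) / n_per_group
    z = diff / sqrt(2.0 / n_per_group)
    return erfc(abs(z) / sqrt(2.0))  # equals 2 * (1 - Phi(|z|))


def false_positive_rates(n_studies=1000, n_outcomes=10, n_per_group=20, seed=7):
    """Compare an honest analyst with one who reports the best of several outcomes."""
    rng = random.Random(seed)
    honest_hits = 0
    hacked_hits = 0
    for _ in range(n_studies):
        p_values = [null_p_value(rng, n_per_group) for _ in range(n_outcomes)]
        if p_values[0] < 0.05:    # outcome chosen before looking at the data
            honest_hits += 1
        if min(p_values) < 0.05:  # report whichever outcome "worked"
            hacked_hits += 1
    return honest_hits / n_studies, hacked_hits / n_studies


honest_rate, hacked_rate = false_positive_rates()
print(f"false positives, one pre-chosen outcome: {honest_rate:.1%}")
print(f"false positives, best of ten outcomes:   {hacked_rate:.1%}")
```

With ten independent chances at p < 0.05, the odds of at least one false alarm are 1 − 0.95^10, or about 40 percent, so the simulated "best of ten" rate should land near that figure while the honest rate stays near 5 percent.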

The practice isn’t necessarily an indictment of social scientists—instead, it tells us just how difficult deriving signal from noisy data actually is. Tellingly, the title of Aschwanden’s piece is “Science Isn’t Broken: It’s just a hell of a lot harder than we give it credit for.” Add to that difficulty the simple matter of human nature and our tendency to interpret data in ways that confirm our existing beliefs—what psychologists call “confirmation bias”—and you have what appears to be a baked-in flaw in scientific research.

“If I had to point to any single thing that would dominate these incorrect papers in the literature, it’s [confirmation bias],” Eisen says.

That doesn’t mean scientists are cheating—just that they’re human like the rest of us. “I think it’s unconscious,” MacCoun says. “I think people are acting with the very best of intentions, that they don’t realize they’re doing it.”

Since confirmation bias is such a well documented, pervasive part of our psychology, Eisen feels the systems of science should be designed to thwart it at every turn. “When you have an idea in the lab and you think it’s right, your first and only goal should be to prove yourself wrong…. You have to train people to think that way. You have to run your lab and do your own research in a way that recognizes your intrinsic tendency to believe in things you think are true. And the way we assess science has to be designed to catch that kind of stuff.”

One way to do that was put forward in a recent commentary in Nature by MacCoun and Nobel Prize–winning Berkeley physicist Saul Perlmutter, who suggested that scientists deal with confirmation bias through “blind analysis.” In blind analysis, a study’s data are intentionally distorted—say, by switching the labels on some parameters—until the analysis is finished. That way, MacCoun explains, researchers make decisions not knowing whether those decisions will help their hypothesis or hurt it, “which means you have to make decisions on the merits of whether it’s good data analysis.”
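In code, the label-switching idea might look something like the sketch below. It is an illustration only, not MacCoun and Perlmutter's procedure: the function names, the outlier rule, and the measurements are all made up, and masking group labels is just one of several ways a data set could be blinded.

```python
import random
from statistics import mean


def blind_labels(records, secret_seed):
    """Swap real group names for neutral codes; return blinded data and the sealed key."""
    rng = random.Random(secret_seed)
    groups = sorted({group for group, _ in records})
    codes = [f"group_{chr(ord('A') + i)}" for i in range(len(groups))]
    rng.shuffle(codes)
    key = dict(zip(groups, codes))
    return [(key[group], value) for group, value in records], key


def analyze(records, outlier_cutoff):
    """Drop values beyond the cutoff, then return the mean for each group."""
    kept = {}
    for group, value in records:
        if abs(value) <= outlier_cutoff:
            kept.setdefault(group, []).append(value)
    return {group: mean(values) for group, values in kept.items()}


# Made-up measurements, for illustration only.
raw = [("treatment", 2.1), ("control", 1.4), ("treatment", 9.7),
       ("control", 1.9), ("treatment", 2.6), ("control", 8.8)]

blinded, sealed_key = blind_labels(raw, secret_seed=2015)

# The analyst settles the outlier rule while seeing only neutral codes.
blinded_result = analyze(blinded, outlier_cutoff=5.0)

# Only after that decision is frozen is the key opened.
decode = {code: group for group, code in sealed_key.items()}
final_result = {decode[code]: average for code, average in blinded_result.items()}
print(final_result)
```

The point of the design is the ordering: the choice of outlier cutoff is locked in before anyone can tell whether it flatters the treatment group or the control group.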

44

Percentage of health care journalists who said, in a 2009 survey, that their organization sometimes or frequently reported stories based only on news releases.*

Although Perlmutter and MacCoun urge more scientists to adopt blind analysis, it’s unlikely to happen en masse. That’s too bad, because our faith in science—our best tool for understanding the world and a critical one for our survival as a species—is at risk. “I think the stakes are high for scientific credibility,” MacCoun says. “Expertise is something you have to earn…. It’s our methods that make us trustworthy, not our degrees or our pedigrees.”

But given all the unresolved weaknesses and uncertainties in the enterprise, how should we regard science going forward? David Christian is a historian at Australia’s Macquarie University and author of Maps of Time, the seminal text of Big History—that is, the story of the entire universe, from the Big Bang to the present, based on the best scientific knowledge available. Christian views science, like history itself, as a useful but imperfect window to reality.

“The universe is big, our minds are small; we’re never going to get absolute certainty,” he says. So we need to strike a somewhat sophisticated epistemological stance in our approach to the world, Christian says, one where “you’re constantly aware of testing claims about truth, but you’re not naïvely cynical or naïvely trusting.”

Science’s answers are always provisional, of course, but to reject science totally would be dangerous and also slightly hypocritical, Christian points out. After all, he says, “Every time someone climbs aboard a plane, they’re putting a lot of trust in science.”

Chelsea Leu is a Berkeley-based freelance writer and former California intern. Her work has also appeared in Bay Nature, Sierra, and Wired.

*Sources: National Consumers League and autism; Nature news item on increase in retractions and percentage due to misconduct; Kaiser Family Foundation/Association of Health Care Journalists on health reporters and press releases.