The Acxiom Corporation has me down as Arab.

Acxiom is a commercial data broker based in Little Rock, Arkansas, a Big Data company that builds consumer profiles by aggregating information from various public and consumer databases. It then packages and sells that information to marketers who want to more accurately target ads to consumers. One of Acxiom’s slogans is “Stop Guessing. Start Knowing.”

Under pressure from lawmakers and privacy advocates to be more transparent, the company recently allowed consumers a glimpse of their personal profiles. Logging in to aboutthedata.com, I discovered that Acxiom knows my birthday, my street address, the last four digits of my social security number, and even when my auto insurance expires.

The Arab thing is a total guess, though: Ethnicity based on surname.

For better or worse, we have now entered the Age of Big Data. The nebulous term refers not just to the unprecedented scale of data (including our own personal data) and computing power now available to marketers, governments, researchers, and the like, but also to an emerging mindset that sees nearly every question facing humanity as suddenly susceptible to computational analysis.

The idea found early expression in an influential 2008 essay in Wired magazine called “The End of Theory.” Chris Anderson, then editor of Wired, announced to readers that we now lived in a “world where massive amounts of data and applied mathematics replace every other tool that might be brought to bear. Out with every theory of human behavior, from linguistics to sociology. Forget taxonomy, ontology, and psychology. Who knows why people do what they do? The point is, they do it, and we can track and measure it with unprecedented fidelity. With enough data,” he concluded, “the numbers speak for themselves.”

The thread is picked up in a new book called Big Data: A Revolution That Will Transform How We Live, Work, and Think. The authors, Kenneth Cukier and Viktor Mayer-Schönberger, argue that “the change in scale has led to a change in state. The quantitative change has led to a qualitative one.” In the brave new world of Big Data, they contend, “society will need to shed some of its obsession for causality in exchange for simple correlations: not knowing why, but only what.” 1

The arguments are provocative, but the underlying logic leads in a disturbing direction. After all, mere correlation may be perfectly adequate when it comes to targeting advertising to consumers. What happens, though, when algorithms are used to, say, target terror suspects for drone attacks? In other words, when merely exhibiting terrorist-like behavior is enough to bring death down from the sky? The question is not entirely hypothetical. According to many news accounts, this is already common procedure. The Pentagon reportedly calls such attacks “signature strikes.” Really. You can Google it. 2

Anderson did not use the term Big Data, which has only recently come into vogue; rather, he referred to The Petabyte Age. Many computers now come equipped with terabyte hard drives. A petabyte is 1,000 times larger: 10¹⁵ bytes. Imagine DVDs filled with data stacked 55 stories high. When you move to data on the scale of petabytes, you are generally no longer talking about information stored locally, but dispersed across the network in the so-called cloud.

In 2003, years before the notion of cloud computing became common currency, UC Berkeley School of Information professors Peter Lyman and Hal Varian (now chief economist at Google) took on the unprecedented task of measuring the world’s data output. They estimated that 5 exabytes (5×10¹⁸ bytes) of new information were created in 2002 alone, roughly equivalent to the amount of information (if digitized) held in the book collections of 37,000 Libraries of Congress. They also found that the creation of information was increasing at a rate in excess of 30 percent per annum, or doubling roughly every three years.
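
For readers who want to check that growth figure on the back of an envelope, here is a minimal sketch. It assumes steady compound growth, which is my simplification rather than anything the study states, and the function name `doubling_time` is hypothetical, chosen only for illustration.

```python
import math


def doubling_time(annual_growth_rate: float) -> float:
    """Years needed for a quantity to double at a constant compound annual rate.

    annual_growth_rate is a fraction, e.g. 0.30 for 30 percent per year.
    Solves (1 + r) ** t == 2 for t.
    """
    if annual_growth_rate <= 0:
        raise ValueError("growth rate must be positive for doubling to occur")
    return math.log(2) / math.log(1 + annual_growth_rate)


if __name__ == "__main__":
    # Compare the 30 percent figure quoted above with a slightly lower rate.
    for rate in (0.26, 0.30):
        years = doubling_time(rate)
        print(f"{rate:.0%} per year: doubles in about {years:.1f} years")
```

Under that assumption, 30 percent a year doubles in a little over two and a half years, so "every three years" is a cautious rounding; a rate nearer 26 percent is what doubles in three.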

Professor Lyman, who died in 2007 at age 66, said the purpose of the study was “to quantify people’s feelings of being overwhelmed by information.”

To be sure, that sense of drowning in data is one of the more salient characteristics of our era. We feel deluged by the endless Friend requests, the never-ending stream of emails and text messages, the ceaseless barrage of spam and phishing scams. On top of that, there’s all the news we can’t keep up with, the books we don’t have time to read, the movies we’ll never see. Some people talk as though they themselves were wired to the network; they say they “haven’t got the bandwidth.” Others compare it to drinking water from a fire hose.

Futurist Alvin Toffler popularized the term “information overload” in the 1970s, but the lament is as old as the Bible. I hear it echoing in my ears whenever I walk into Moe’s Books or the Doe Library: “Of making many books there is no end.” That goes more than double for blog posts and tweets and—God help us—Buzzfeed lists, the dreaded “listicles.” With apologies to Ecclesiastes, the current info glut really is something new under the Sun.

How did we get here? Like the story of the cosmos, the story of our digital universe begins with a bang—a big one.

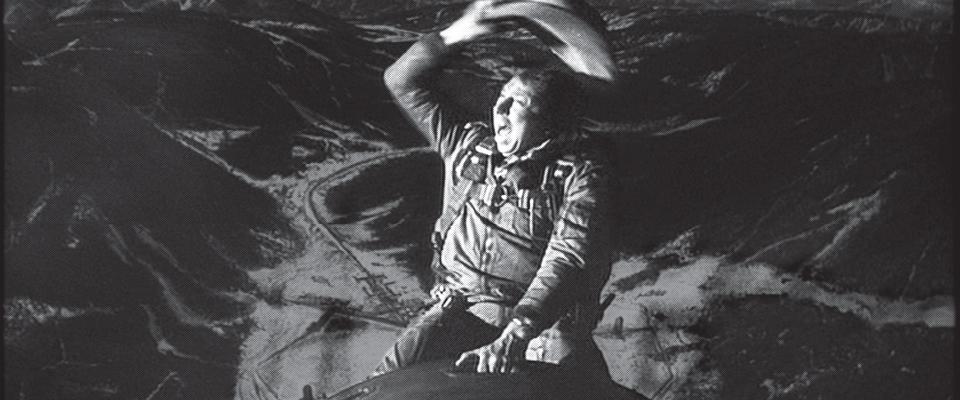

MANIAC, the first digital electronic stored-program computer and granddaddy to every PC in existence today, was designed specifically to run the calculations necessary to build the first hydrogen bomb. Dubbed “Ivy Mike,” the weapon was tested over the island of Elugelab on November 1, 1952. (Don’t bother looking for Elugelab in your atlas. The H-bomb, roughly 1,000 times more explosive than the devices dropped on Hiroshima and Nagasaki, wiped the innocent atoll off the map.) 3

Science historian George Dyson tells the story in his new book, Turing’s Cathedral. As part of this year’s On the Same Page program, which annually endeavors to create a shared intellectual experience for Berkeley students, the University purchased 8,000 copies of Dyson’s book and gave one to every incoming freshman, transfer student, and faculty member. 4

As you may have guessed, this is only marginally a story about weapons of mass destruction. As awesome as the H-bomb was, its consequences may ultimately pale in comparison to those of the computer that made it possible. “It was the computers that exploded, not the bombs,” Dyson writes. (Call it the I-bomb, or better yet, the iBomb.) Indeed, even as the Cold War recedes into history, the shock waves of the digital revolution continue to spread, ramifying in all directions, with greater and greater speed.

The engine of this acceleration is described by Moore’s Law, which is not really a law so much as an astoundingly accurate prediction. Intel cofounder and Berkeley alum Gordon Moore made the offhand observation in 1965 that the number of transistors on integrated circuits was doubling every two years and would continue to do so for the foreseeable future. And so it has (growing even faster, doubling every 18 months or so), with the concomitant effect that computing has become not only faster but cheaper. In that way, Moore’s Law also helps to explain why most of our electronic gadgets seem to obsolesce overnight.

The effects of the information explosion on the University are increasingly evident, practically encoded on the place in an alphabet soup of acronyms and letter names, from well-established departments such as EECS (Electrical Engineering and Computer Sciences), to relatively new multidisciplinary institutes such as CITRIS (Center for Information Technology Research in the Interest of Society); from the newly inaugurated D-Lab (Social Sciences Data Laboratory) to the nearly two-decade-old I-school (School of Information). It is evident, too, in the proliferation of new branches of research, from ecoinformatics to econometrics, from genomics to computational chemistry. (The 2013 Nobel Prize in Chemistry was awarded for work done on computer models, not in traditional chemistry labs.)

The watchword around campus now, much favored over Big Data, which is looked upon as hype-fueled and faddish, is “data-driven discovery.” Faculty members also speak of bringing a “computational lens” to research problems. The idea is applicable not just to the so-called hard sciences, but to the social sciences and humanities as well, where improved text-analysis software and new data-visualization techniques are proving to be powerful tools.

Berkeley historian and associate dean of social sciences Cathryn Carson has been the point person in the formation of D-Lab, a new Berkeley initiative aimed at fostering cutting-edge data-intensive research in the social sciences. I met with her recently at Barrows Hall, in D-Lab’s newly carved-out space that features an inviting, lounge-like area called The Collaboratory. Here students and staff can team up to engage with their research data in what Carson described as “qualitatively new ways as more and more data streams become available.”

As the data flows, so too does the money. In the last two years alone, Berkeley has launched several new data-science efforts, all backed by considerable capital infusions from government, foundations, and private industry. These include the Scalable Data Management, Analysis, and Visualization (SDAV) institute at Berkeley Lab, supported by a $25 million award from the Department of Energy; the Algorithms, Machines and People (AMP) Lab (see “Wrangling Big Data”), which launched with a $10 million National Science Foundation seed grant that has since been buttressed by $10 million from industry and another $5 million from the Department of Defense; and the Simons Institute for the Theory of Computing, which was funded by a $60 million grant from the Simons Foundation and which claims Google as a corporate partner. Finally, there is the Berkeley Institute for Data Science (BIDS), an interdisciplinary effort to be centrally located in Doe Library and headed by Berkeley astrophysicist and Nobel laureate Saul Perlmutter. BIDS was formally announced just as this magazine was going to press. It is backed by a $37.8 million grant from the Gordon and Betty Moore and Alfred P. Sloan Foundations, to be shared with two other schools.

This burst in institutional growth would be impressive for any era, but for a period of postrecession malaise and tight budgets, it’s nothing short of phenomenal.

Professor David Culler, the computer scientist who chairs EECS, sees the developments as part of the latest phase in the evolution of Berkeley, from its earliest beginnings as a Gold Rush school built around mineralogy and mining engineering, to one that is increasingly being organized around “data in a globally connected world.”

The time is ripe, thinks Culler. “People tend to view the last 50 years, starting with the birth of computing, as the Information Age. What’s clear is, we ain’t seen nothin’ yet; that in some sense that was just building the plumbing for the Information Age. And of course if you think about it, the Industrial Revolution begins around 1865, the Civil War, but it didn’t really transform society until the early 1900s. It’s not too surprising that the second phase is far more transformative than when the plumbing is going in.”

Data and information are not the same thing, of course. Data is raw, information is refined. It’s the difference between crude oil and gasoline. To extend the metaphor, neither is an end in itself but rather the fuel to propel us to our destination: Wisdom or, at least, Knowledge (although no doubt many students in these cash-strapped times would settle for Gainful Employment).

In an interview before he died, Peter Lyman drove home a similar distinction, between information and meaning. Referring to the aforementioned study he conducted with I-school colleague Hal Varian, Lyman said: “All we tried to measure in figuring out how much information is produced was how much storage it would take to hold it all. What we didn’t address is what makes information valuable…. There’s a real gap between the amount of information we store and the amount of information we know how to use.… So in a sense, most of it is noise.”

Filtering the signal from the noise is the principal challenge for all Big Data enterprises, whether it’s astronomers searching for supernovae in raster scans of the night sky, or agents at the NSA listening for whispered hints of terror plots in the vastness of global communications. Big Data is just the haystack; we still have to find the needle. Scratch that. Increasingly, it’s our computers, running what are called inferential algorithms, that have to find it. Our job now is to verify that what is found is, in fact, a needle and not, say, a pin, or a nail, or a piece of hay.

Cutting through the noise is also our challenge as individuals.

On the personal level, Lyman felt the problem of information overload required a new kind of literacy, which he described as “an economy of attention, … filtering, choosing, selecting, customizing.” It wasn’t always thus. “We’ve changed from the world of clay sculpture, in which you add knowledge by learning something, to a world in which literacy consists of knowing what to throw away. It’s like marble sculpture now; throw away the right bits and you have art.”

David Culler sees a similar dynamic playing out in terms of learning. “If you think about how we learn,” he said, “we’re still of the generation that builds the pyramid from the bottom up. You start with the facts and tools and techniques, and then you build up on that and become more specialized. Increasingly, how young people learn is by building from the top down, because now you can take an interest in something and, given that the world’s information is accessible, you can sort of learn a little bit from Wikipedia or whatnot until you’ve grown enough of a foundation that you understand what you’ve learned at the top.” 5

Partly for this reason, many educators now advocate “flipping the classroom”; that is, having students watch lectures at home and do homework in class, where the instructor can offer personalized assistance.

Despite the fact that we all are consumers of information, few of us spend much time thinking about it, and fair enough—as a subject, it is almost too obvious to be noticed. Reading this, for example, you do not stop to consider that I am communicating to you via an ancient code consisting of arcane arrangements of 26 letters. Likewise, in writing it, I do not pause to consider the word processing software that enables me to type this on a computer and to do useful things like backspace, copy, save as, and all the rest.

James Gleick takes information itself as his subject in The Information: A History, a Theory, a Flood. As if beckoning readers down the rabbit hole, Gleick writes, “Increasingly, the physicists and the information theorists are one and the same. The bit is a fundamental particle of a different sort: not just tiny, but abstract—a binary digit, a flip-flop, a yes-or-no. It is insubstantial, yet as scientists finally come to understand information, they wonder whether it may be primary: more fundamental than matter itself.”

Or, “It’s bits all the way down,” as Berkeley linguist Geoffrey Nunberg somewhat mockingly summarized in what was nevertheless a mostly positive review of Gleick’s book for The New York Times. 6

Nunberg is a professor at Berkeley’s School of Information. When I first began thinking about this topic, I sat down with him and his I-school colleagues, Paul Duguid and sociologist Coye Cheshire. If there was a theme to that conversation, it was that the future of information technology is too important to leave to the engineers.

They used Google to illustrate the point.

“Google is a place where, by virtue of being a religion, the solution to technological problems is to throw more technology at it,” Nunberg said. “So when they come to do Google Books, they build a tool where you can just, in Paul’s phrase, ‘barrel in sideways.’ You can get at all this information just by throwing search terms at it, and you don’t have to know about where the book was published, or all that metadata that’s in the card catalog.”

But the metadata—which includes details about editions, publishers, translations, and so on—is crucial, insists Nunberg. “Even now,” he said, “if you look in Google for books published before 1960 that have the word Internet mentioned, there are 400 of them. It’s a train wreck—25 percent of their books are misdated, by their own figures.”

Duguid added, “One of the things that Geoff and I find people go ballistic over is that we criticize Google Books. But they’re not going to get it right unless they understand how they’ve gotten it wrong. And it takes many different disciplines. We have a team of computer science graduates to take on Big Data. And you need them—but they alone aren’t going to be able to say what the data can tell you and what it can’t.” In other words, contrary to Chris Anderson, the data does not “speak for itself.”

It was Coye Cheshire who first broached Anderson’s Wired essay in our discussion, holding it up as an example of the kind of technological determinism that he finds both common and wrongheaded. To illustrate, Nunberg brought up a picture he found in a Popular Mechanics from 1950 showing the Home of the Future. “There’s this woman hosing down her couch, and the caption reads, ‘Because all of the furniture in her house is waterproof, the housewife of the Year 2000 does her cleaning with a hose.’ And there’s a drain under the floor.”

The technological determinists inevitably get it wrong, Nunberg said, because they “take whatever the newest technology is and just project it out, driving everything else off the table—natural fibers and so on—out of here. But the other interesting thing is the tendency to naturalize and universalize these very contingent social categories: to assume, for example, that 50 years later that there would still be housewives. The idea that there’s this huge social custard that really determines how technology is going to be deployed, they just can’t imagine it.”

To be sure, predicting the future is a fool’s game. But some of us are more foolish than others. As shown by Philip Tetlock, a psychologist and former Haas School of Business professor, in his 2005 book Expert Political Judgment, most political pundits are routinely wrong. Done in by a mix of overconfidence and black-and-white thinking, their prognostications are little better than random guesses. Tetlock labeled such people “hedgehogs” and contrasted them with more cautious, nuanced thinkers, whom he called “foxes.” Tetlock found that foxes are significantly better forecasters than hedgehogs, but the latter get all the attention.

Chris Anderson is a classic hedgehog. In my travels around campus gathering material and interviewing sources for this story, I’ve encountered mostly foxes.

There have been exceptions. Perhaps my closest brushes with techno-utopianism came at a forum held at CITRIS. I’m thinking of the chief information officer for the City of Palo Alto, who said “we’ll be in a world within a few years where, when we think of something, we can do it.”

Then there was the conference’s keynote speaker, Lieutenant Governor (and UC Regent) Gavin Newsom, whose new book Citizenville is a deep draught of Silicon Valley Kool-Aid. Newsom envisions techno-fixes for all of government’s ills. “In the private sector and in our personal lives, absolutely everything has changed over the last decade,” Newsom writes. “In government, very little has. Technology has rendered our current system of government irrelevant, so now government must turn to technology to fix itself.” (He was speaking prior to the calamitous rollout of healthcare.gov.)

On stage in Sutardja Dai Hall, the former mayor of San Francisco smugly warned his audience that new technologies and online competitors such as edX and Coursera would soon disrupt higher education’s economic model in much the same way that Craigslist upended newspapers, and digital photography killed film. UC regents’ meetings, he said, were “reminiscent of Kodak’s board of directors meetings…. Kodak, remember, they invented the digital camera. They invented it! And then it was the world they invented that competed against them, and they’re bankrupt.” He made it sound like a good thing.

More typical of the presentations I heard at CITRIS were talks like the one delivered by Microsoft Research’s Kate Crawford, at the I-school’s DataEDGE conference, called “The Myths of Big Data.” Among the fallacies Crawford chose to highlight was the idea that “Big Data makes cities smart.” While allowing that yes, used correctly, Big Data really can help us to run cities better and more efficiently, she also insisted it can take us only so far, largely because whole groups of people are inevitably left out—the poor and disenfranchised, for example, but potentially also those without smartphones or Twitter accounts. Ultimately, she said, Big Data is “only as good as the city officials who are using that data, analyzing it, and thinking about its limits.”

Then there’s Jaron Lanier. A virtual reality pioneer turned digital skeptic, Lanier is the author of You Are Not a Gadget: A Manifesto and, more recently, Who Owns the Future? In the latter, he takes a far less sanguine view of Kodak’s demise than Newsom did. He observed that, where Kodak once employed more than 140,000 people, Instagram employed just 13 when it was snapped up by Facebook. “Where did all those jobs disappear?” Lanier wondered. “And what happened to the wealth that all those middle-class jobs created?”

Like the lieutenant governor, Lanier also warned that universities could go the way of newspapers. Unlike the lieutenant governor, he didn’t make it sound either good or inevitable.

For my final interview, I sat down with Dick Karp, director of the Simons Institute for the Theory of Computing, which is now the sole occupant of Melvin Calvin Laboratory. Entering the newly renovated circular building, I expected to be met by the hum of machines, the sight of people bent over keyboards, and network cables running through the architecture. In fact, the space is airy and light, dominated not by monitors but by whiteboards and comfortable, brightly colored furniture. Every weekday afternoon, the staff and fellows meet for teatime. On Tuesdays they have happy hour.

Similarly, I was mistaken in expecting to find Professor Karp to be somewhat intimidating. He is a towering figure in his field, after all, a National Medal of Science recipient and winner of the A.M. Turing Award, which is sometimes called “the Nobel Prize of computer science.” To my relief, I found Karp to be unpretentious, gracious with his time, even avuncular. When I prodded him about the institutional attitude toward Big Data, Karp smiled placidly and said, “We try to focus on the science, not the sizzle.”

I asked him whether I was right in thinking that computer science was coming into its own and whether all the sciences were in effect becoming information sciences. The answers were yes and yes. “For a long time,” he said, “computer science has been a tool, but not a full partner in scientific research. It’s changing now, and I think every aspect of human organization is potentially able to benefit from that.” He mentioned improved earthquake predictions and better models of how the brain works: “That’s a Big Data problem, par excellence.” But there are also risks going forward. “With more and more data, more and more hypotheses can be generated and, at that scale, statistical regularities may emerge that are bogus.”

Addressing the second part of my question, Karp went to his computer and read a quote from the biologist and Nobel laureate David Baltimore: “Some say biology is the science of the 21st century, but information science will provide the unity to all of the sciences. It will be like the physics of the 20th century.… Information science, the understanding of what constitutes information, how it is transmitted, encoded, and retrieved, is in the throes of a revolution whose societal repercussions will be enormous.”

When he finished reading, I asked, “But will those repercussions be good or bad?”

His answer was not really an answer. He said, “Exactly.”

Pat Joseph is the executive editor of California magazine. For the record, he’s mostly Irish and Portuguese, with a couple of other mongrel strains thrown in for good measure.

1 Reading this, I suspect many fans of the late comic novelist Douglas Adams will recall the story of Deep Thought, the supercomputer in Adams’s classic sci-fi romp, The Hitchhiker’s Guide to the Galaxy. Asked to solve “the ultimate question of life, the universe and everything,” Deep Thought labored for millions of years before returning with a very simple and totally unsatisfactory answer: 42.

2 To the Mountain View company’s chagrin, “Google” is now treated as a transitive verb, and not just in English. All across Latin America, people employ the infinitive, “guglear,” to describe the act of searching for information online. In Sweden, they use it to describe a negative. They talk about “ogooglebar” or “the ungoogleable.” To say something is ungoogleable is to question its very existence. Citing trademark concerns and ever-protective of their brand, Google’s lawyers recently prevailed upon the Swedish Language Council to remove the neologism from its list of the Top 10 new words of 2012. Despite that measure, “ogooglebar” is still eminently googleable.

3 The acronym MANIAC stands for “mathematical and numerical integrator and computer,” and was adopted from the scientists who built the ENIAC, MANIAC’s less versatile predecessor. The ENIAC team called their machine MANIAC when it failed to function correctly.

4 George Dyson, whose father is the famous physicist Freeman Dyson, and whose mother, Vera Huber-Dyson, taught mathematics at Berkeley, enrolled at Cal in 1969, at age 16, but quickly dropped out. For years afterward, he lived in a treehouse perched 90 feet up a Douglas fir tree on the coast of British Columbia, where he devoted himself to reviving the baidarka, an Aleutian-style kayak. That story is told in Kenneth Brower’s 1978 book The Starship and the Canoe. Asked recently why he dropped out of Berkeley, Dyson said he couldn’t stand the required reading.

5 I should confess here that I’ve assembled much of the information for this article in precisely this way and that, often as not, my starting point was Wikipedia. No doubt this admission will arouse suspicions, maybe even disdain, in some readers. Perhaps that’s as it should be. As George Dyson’s father, Freeman Dyson, wrote: “Among my friends and acquaintances, everybody distrusts Wikipedia and everybody uses it. Distrust and productive use are not incompatible.”

6 The allusion is to the anecdote, popularized by physicist Stephen Hawking, about an astronomer who, at the end of a talk on cosmic origins, is confronted by an elderly woman who informs him that he has it all wrong. The world rests on the back of a turtle, she insists. “Ah,” says the astronomer, “but what supports the turtle?” “You’re very clever, young man,” answers the woman. “Very clever. But it’s turtles all the way down.”